Floating point numbers are used in most calculations performed in Matlab and other programming languages. Often misunderstood, floating point arithmetic can cause confounding problems in addition, subtraction, multiplication, division, comparison, and other types of calculations. In this series of posts, I would like to describe the basics of floating point numbers that conform to IEEE Standard 754, introduce several Matlab functions that provide information about floating point numbers, provide a pair of functions that convert between the decimal and binary floating point representations, present some examples of how to view floating point numbers in different formats, and demonstrate how to handle some common problems with their arithmetic. In this post, I will give a brief overview of floating point numbers, introduce several Matlab functions that handle floats, and go into detail about one of these functions, eps.

Basics of floating point numbers

The goal of the floating point number scheme is to represent real numbers in a fixed amount of memory in a way that maximizes the range of encodable numbers while maintaining a high degree of precision. A floating point number is the computer equivalent of scientific notation: a floating point number with base β, precision p, and exponent e has the form

$$\pm\, d_0.d_1 d_2 \ldots d_{p-1} \times \beta^{e}$$

Digit d0 is the leading digit, followed by digits d1 to d(p-1), which are the less significant digits. For example, a decimal number (base 10) with a precision of 4 is shown here:

7.201 × 10^5
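The same idea in base 2 is what the hardware stores. The minimal sketch below is written in Python rather than Matlab. That is an assumption for illustration only: Python's float is the same IEEE 754 double as Matlab's default type. The helper `normalize_binary` is a hypothetical name. It uses the standard `math.frexp` to rewrite a number as a significand whose leading digit is 1, multiplied by a power of 2.

```python
import math

def normalize_binary(x: float) -> tuple[float, int]:
    """Return (significand, exponent) with x == significand * 2**exponent
    and 1 <= |significand| < 2, i.e. d0.d1d2... x beta^e with beta = 2.
    Assumes x is finite and nonzero."""
    m, e = math.frexp(x)      # frexp returns 0.5 <= |m| < 1
    return m * 2.0, e - 1     # shift by one bit so the leading digit d0 is 1

sig, exp = normalize_binary(7.201e5)
print(f"7.201e5 = {sig!r} x 2^{exp}")
```

Multiplying by 2.0 and decrementing the exponent is exact, so the pair reproduces the original value bit for bit.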

The digits in the first part of the floating point number are collectively called the significand or the mantissa. To encode real numbers in a binary format, a standard must specify how many bits are used for the sign, the exponent, and the significand.

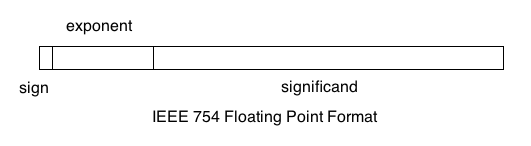

Matlab uses IEEE Standard 754, the most common floating point standard, for both single- and double-precision numbers. For a single-precision number, the standard specifies one sign bit, 8 exponent bits, and 23 significand bits, for a total of 32 bits (4 bytes). For a double-precision number (Matlab's default numeric data type), it specifies one sign bit, 11 exponent bits, and 52 significand bits, for a total of 64 bits (8 bytes). For normal numbers, the leading digit is assumed to be 1 and is not stored. This gives 24 and 53 significant binary digits for single- and double-precision floats, respectively. To represent both negative and positive exponents, the exponents of single-precision (single) and double-precision (double) floating point numbers are stored with a bias of 127 and 1023, respectively: the stored exponent field equals the true exponent plus the bias. The diagram above shows the structure of an IEEE 754 floating point number. The sign bit is on the left, followed by the exponent and then the significand. The least significant digits of the exponent and significand are located in the rightmost bits.
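A minimal sketch of how the three fields can be pulled out of a number. It is written in Python rather than Matlab, an assumption made for illustration: Python floats are IEEE 754 doubles, and the standard `struct` module with formats `'>d'` and `'>f'` yields the raw big-endian bytes. `double_fields` and `single_fields` are hypothetical helper names.

```python
import struct

def double_fields(x: float) -> tuple[int, int, int]:
    """Split an IEEE 754 double into (sign, biased exponent, fraction) bits."""
    (bits,) = struct.unpack(">Q", struct.pack(">d", x))  # raw 64-bit pattern
    sign = bits >> 63                   # 1 sign bit
    exponent = (bits >> 52) & 0x7FF     # 11 exponent bits, bias 1023
    fraction = bits & ((1 << 52) - 1)   # 52 stored significand bits
    return sign, exponent, fraction

def single_fields(x: float) -> tuple[int, int, int]:
    """Same split for IEEE 754 single precision (x is rounded to single first)."""
    (bits,) = struct.unpack(">I", struct.pack(">f", x))  # raw 32-bit pattern
    return bits >> 31, (bits >> 23) & 0xFF, bits & ((1 << 23) - 1)

for value in (1.0, -2.5, 0.1):
    s, e, f = double_fields(value)
    # For normal numbers: value == (-1)**s * (1 + f / 2**52) * 2**(e - 1023)
    print(f"{value!r}: sign={s} exponent={e} (unbiased {e - 1023}) fraction=0x{f:013X}")
```

For 0.1, the biased exponent is 1019, so the true exponent is 1019 − 1023 = −4, and 0.1 ≈ 1.6 × 2^-4.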

Matlab functions handling floats

Matlab includes some basic functions that provide information about its floating point number scheme. Functions realmin and realmax return the smallest and largest positive normalized numbers, respectively, that can be represented as Matlab doubles. To find these values for singles, pass "single" as an argument to realmin or realmax. For doubles, realmin and realmax equal 2^-1022 and (2 − 2^-52) × 2^1023, respectively.

>> format long

>> realmin

ans = 2.22507385850720e-308

>> realmax

ans = 1.79769313486232e+308

>> realmin("single")

ans = 1.17549435082229e-38

>> realmax("single")

ans = 3.40282346638529e+38
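The closed forms for realmin and realmax can be checked numerically. This Python sketch assumes CPython, whose `sys.float_info.min` and `sys.float_info.max` hold the double-precision limits. It evaluates the formulas exactly, because every factor is a power of 2 or a short binary fraction. The single-precision lines use the analogous forms 2^-126 and (2 − 2^-23) × 2^127.

```python
import sys

# Closed forms from the text; each product is exact in binary arithmetic
realmin_double = 2.0 ** -1022                      # smallest normal double
realmax_double = (2.0 - 2.0 ** -52) * 2.0 ** 1023  # largest finite double
realmin_single = 2.0 ** -126                       # smallest normal single
realmax_single = (2.0 - 2.0 ** -23) * 2.0 ** 127   # largest finite single

print(realmin_double == sys.float_info.min)
print(realmax_double == sys.float_info.max)
print(realmin_single, realmax_single)
```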

In IEEE Standard 754, several kinds of values have special binary representations: normal numbers, subnormal numbers, signed zeros, infinities, and NaNs. Normal numbers are represented with an assumed leading 1 in the significand. They include every number whose magnitude lies between realmin and realmax, inclusive. Subnormal numbers (also called "denormal" numbers) have the minimum exponent and leading zeros in the significand. A subnormal number is the equivalent of 0.0123 × 10^-2 in a decimal system whose smallest exponent is -2. IEEE Standard 754 allows these numbers to fill the "underflow gap" between the smallest normal number and zero. The smallest subnormal number can be obtained in Matlab as eps(0). The eps function returns the spacing between its argument and the next larger floating point number (in magnitude), and its default argument is 1. This function effectively computes one "unit in the last place" (ulp) of a floating point number, a quantity often mentioned in the literature on floating point arithmetic.

>> eps

ans = 2.22044604925031e-16

>> eps(0)

ans = 4.94065645841247e-324
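Outside Matlab, the same quantities are available in Python 3.9 or later, which is assumed here. `math.ulp` plays the role of eps, `sys.float_info.epsilon` is eps(1), and `math.nextafter` steps to the adjacent double. This sketch is illustrative and is not a transcription of how Matlab implements eps.

```python
import math
import sys

# Assumes Python 3.9+ for math.ulp and math.nextafter
print(sys.float_info.epsilon)    # eps(1) in Matlab terms: 2^-52
print(math.ulp(1.0))             # spacing at 1.0, also 2^-52
print(math.ulp(0.0))             # smallest positive subnormal: 2^-1074

# Measure the gap to the next larger double directly
print(math.nextafter(1.0, math.inf) - 1.0)

# Gradual underflow: realmin/2 is a subnormal, not zero...
print(sys.float_info.min / 2 > 0.0)
# ...but half of the smallest subnormal rounds to 0 (round half to even)
print(math.ulp(0.0) / 2 == 0.0)
```

The last two lines show the underflow gap being filled: halving realmin lands on a subnormal rather than on zero, and only below 2^-1074 is there nothing left to round to.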

The value of eps(0) is 2^-1074, the smallest positive value that can be represented as a 64-bit floating point number in Matlab, in accordance with IEEE Standard 754. The smallest positive value for singles is 2^-149. For normal numbers, eps(x) equals 2 raised to the power floor(log2(|x|)) − 52. It therefore doubles each time |x| crosses a power of 2, which produces a step-wise function:

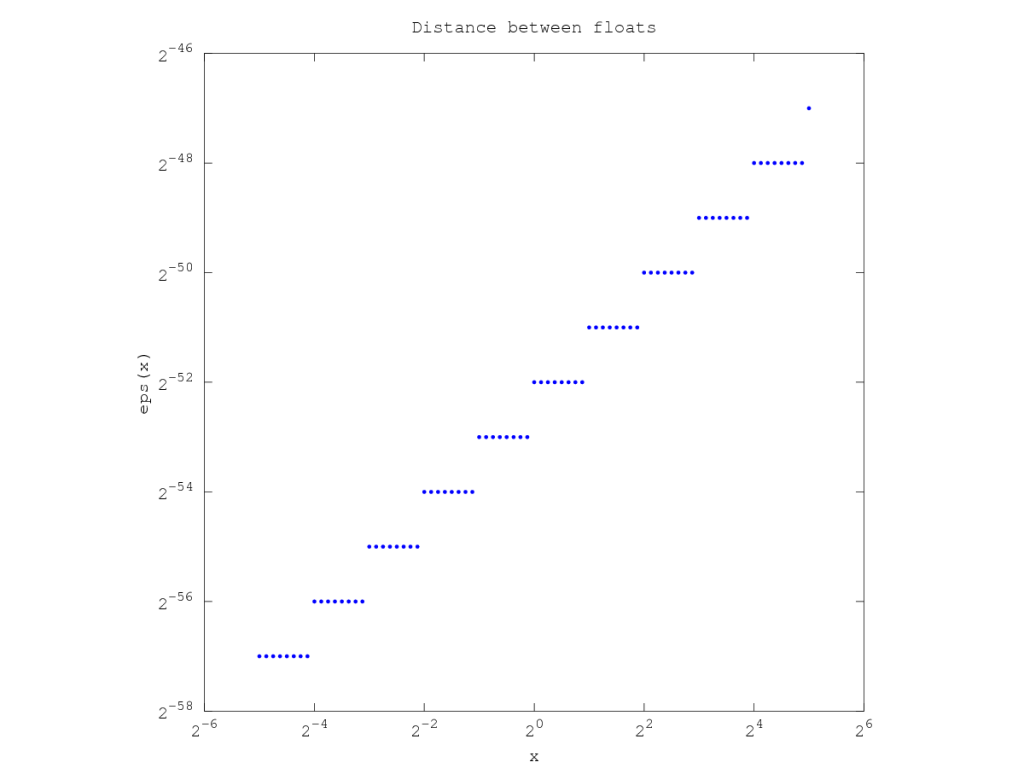

$$\mathrm{eps}(x) = 2^{\lfloor \log_2 |x| \rfloor - 52}, \quad \text{realmin} \le |x| \le \text{realmax}$$

$$\mathrm{eps}(x) = 2^{-1074}, \quad |x| < \text{realmin}$$
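The piecewise formula can be turned into a small function and compared against a library ulp. In this Python sketch, `eps_like` is a hypothetical name. It uses `math.frexp` to obtain floor(log2|x|) exactly, because `math.log2` can round up to the next integer just below a large power of 2 (for example at realmax). The comparison with `math.ulp` assumes Python 3.9 or later.

```python
import math
import sys

def eps_like(x: float) -> float:
    """Piecewise eps(x) from the formula above, for finite x."""
    ax = abs(x)
    if ax < sys.float_info.min:             # zero and subnormals
        return math.ldexp(1.0, -1074)
    _, e = math.frexp(ax)                   # ax = m * 2**e with 0.5 <= m < 1
    return math.ldexp(1.0, (e - 1) - 52)    # floor(log2|x|) == e - 1

for x in (1.0, 3.0, 0.1, 1e300, sys.float_info.min, sys.float_info.max, 1e-310):
    print(x, eps_like(x), eps_like(x) == math.ulp(x))
```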

As shown in the figure below, the log-log plot of eps(x) against x is a step-wise function that passes through eps(1) = 2^-52 at x = 1. The function is step-wise because the exponent of a float increases by one each time its magnitude crosses a power of 2. The smallest increment, eps, equals the weight of the least significant bit of the significand (2^-52) multiplied by 2 raised to the exponent. As the magnitude of a float increases, it must be represented with a larger exponent, which raises the value of the least significant bit of its significand. This has direct consequences for the density of floating point numbers. Because the significand has 52 stored bits, there are 2^52 floats for each exponent value. Consequently, every interval between adjacent powers of 2 contains the same number of floats, so floating point numbers become sparser as their magnitude increases.
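The claim that every interval between adjacent powers of 2 holds 2^52 doubles can be checked by counting bit patterns. This Python sketch relies on a property of the IEEE 754 encoding itself, not on any Matlab function. For non-negative doubles, the 64-bit pattern read as an unsigned integer increases monotonically with the value, so subtracting two patterns counts the doubles between them. `ordinal` and `floats_in_binade` are hypothetical helpers.

```python
import struct

def ordinal(x: float) -> int:
    """Raw bit pattern of a non-negative double as an unsigned integer.
    For x >= 0 the patterns increase with x, so differences count doubles."""
    return struct.unpack(">Q", struct.pack(">d", x))[0]

def floats_in_binade(k: int) -> int:
    """Number of doubles in [2**k, 2**(k+1)); assumes both ends are normal."""
    return ordinal(2.0 ** (k + 1)) - ordinal(2.0 ** k)

for k in (-500, 0, 10, 500):
    print(k, floats_in_binade(k) == 2 ** 52)
```

Because the same count is spread over an interval twice as wide at each step, the spacing doubles, which is exactly the staircase in the eps plot.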

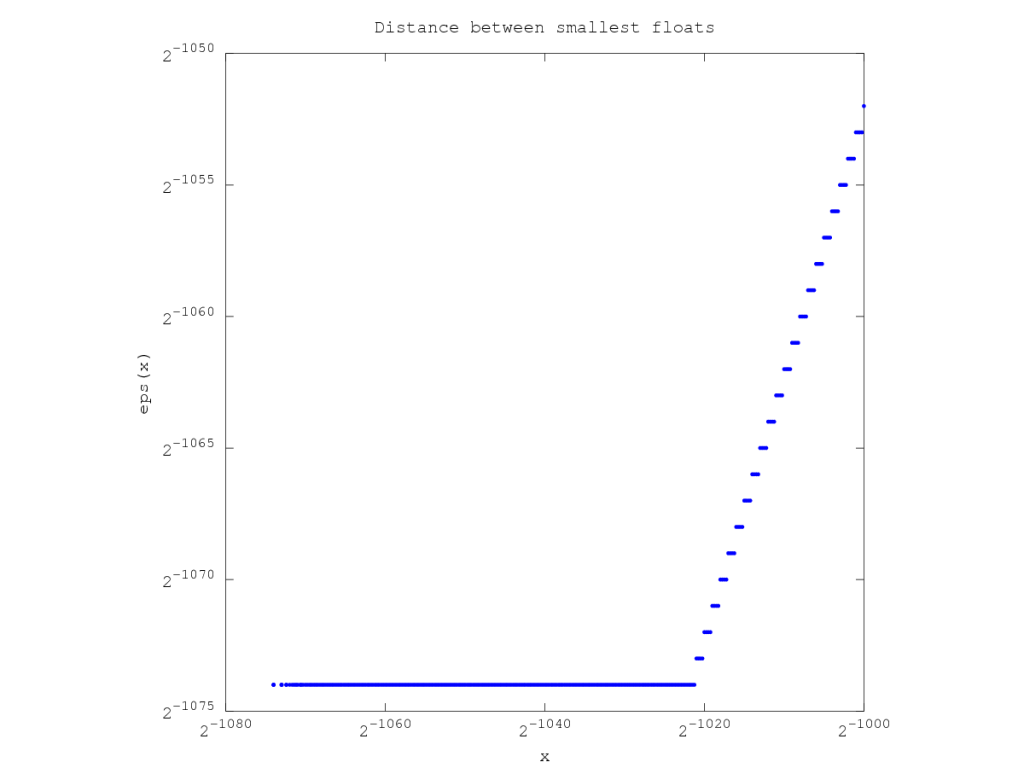

As shown in the next figure, once the exponent reaches its minimum value (-1022), the spacing of the floats becomes constant across the subnormal range, from 2^-1074 up to realmin (2^-1022). The difference between adjacent values is the weight of the last significand bit (2^-52) multiplied by 2 to the power of the minimum exponent (2^-1022), which equals 2^-1074.
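A short Python sketch that checks the constant spacing at the bottom of the range; it assumes Python 3.9+ for `math.nextafter` and `math.ulp`. The gap between neighbouring doubles stays at 2^-1074 from the smallest subnormal up through realmin, and it doubles only once the exponent increases at 2 × realmin.

```python
import math
import sys

tiny = math.nextafter(0.0, 1.0)              # smallest subnormal, 2^-1074
realmin = sys.float_info.min                 # smallest normal, 2^-1022
largest_sub = math.nextafter(realmin, 0.0)   # largest subnormal

print(tiny == 2.0 ** -1074)
print(largest_sub == realmin - tiny)

# Every subnormal is an integer multiple k * 2^-1074, so the spacing is constant
for x in (tiny, 1e-320, 1e-310, largest_sub, realmin):
    print(x, math.ulp(x) == tiny)

# From 2*realmin upward the exponent has grown, so the spacing doubles
print(math.ulp(2 * realmin) == 2 * tiny)
```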

Special floating point numbers also include signed zeros, infinities, and NaNs. Signed zeros are values of zero (-0 and +0) that have all of their exponent and significand bits set to zero but have different sign bits. This small difference in sign has ramifications in calculations performed near zero or infinity, as well as in complex arithmetic. Positive and negative infinity can also be represented as floating point numbers, which is useful for distinguishing between the results of calculations such as 1/0 and -1/0. Returning infinity instead of raising an overflow error lets a computation continue, which increases program stability. NaN (not a number) is another value that was developed to increase program stability and accommodate undefined results. NaN is produced by the following calculations: ∞ – ∞, 0 × ∞, 0/0, ∞/∞, rem(∞,n), rem(n,0), and most arithmetic involving NaN. It is important to remember that whereas infinities compare equal (Inf == Inf is true), NaNs do not (NaN == NaN is false, and NaN ~= NaN is true). Additionally, there are two types of NaNs, "quiet" and "signalling", which behave differently during computations. Quiet NaNs (QNaNs) allow a computation to finish and merely record that the results of the operation are undefined, whereas signalling NaNs (SNaNs) are used to raise exceptions and halt program flow.
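Most of these rules can be observed from Python, with one caveat. Python raises `ZeroDivisionError` for float division by zero (for example `1.0/0.0`), where Matlab returns Inf or NaN, so this sketch sticks to operations that follow IEEE 754 directly. `fields` is a hypothetical helper that exposes the sign, exponent, and fraction bits. The sketch does not attempt to produce a signalling NaN.

```python
import math
import struct

def fields(x: float) -> tuple[int, int, int]:
    """(sign, biased exponent, fraction) bits of an IEEE 754 double."""
    (bits,) = struct.unpack(">Q", struct.pack(">d", x))
    return bits >> 63, (bits >> 52) & 0x7FF, bits & ((1 << 52) - 1)

inf, nan = math.inf, math.nan

# Signed zeros: equal in comparisons, but the sign bit differs
print(0.0 == -0.0, fields(0.0), fields(-0.0))
print(math.copysign(1.0, -0.0))      # the sign survives: -1.0

# Infinities: all exponent bits set, zero fraction; Inf == Inf
print(fields(inf), inf == inf)

# Invalid operations give NaN (all exponent bits set, nonzero fraction)
for result in (inf - inf, 0.0 * inf, inf / inf):
    print(math.isnan(result), fields(result)[1])
print(nan == nan, nan != nan)        # NaN compares unequal even to itself
```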

In this post, I gave a brief overview of floating point numbers, introduced several Matlab functions that handle floats, and went into detail about eps, the function that returns the distance to the next floating point number. In future posts, I will provide some functions that convert between the decimal and binary floating point representations, present some examples that show how to view floating point numbers in different formats, and demonstrate how to avoid some common problems in floating point arithmetic.
